Data at Depth 16: Substack consistency, Investing in My Writing, Learning Streamlit with GPT-4 Code Tutor

Substack keeps growing, learning from those better than me, a useful GPT-4 tool

Today, a few topics:

My Data at Depth: documenting my own creator journey - extensive data analysis across platforms for the past 2 weeks - Substack is still growing at an awesome rate, Medium has, increased number son Linked In, decreased numbers on reddit, X.

Thinking and Creating: Some insight into what I have been creating and doing during the past 2 weeks (signing up for expert advice, off to receive family advice)

Doing - Tutorial: Leveraging Khan Academy’s GPT-4 Code Tutor GPT to learn Streamlit. Examples of how this tool can help you put together streamlit data visualization code from the global happiness dataset.

1. My Data at Depth - The Raw Numbers

It’s been a reasonable two weeks. I have been putting the time I have into Substack and I have managed to maintain all my momentum!

My Substack:

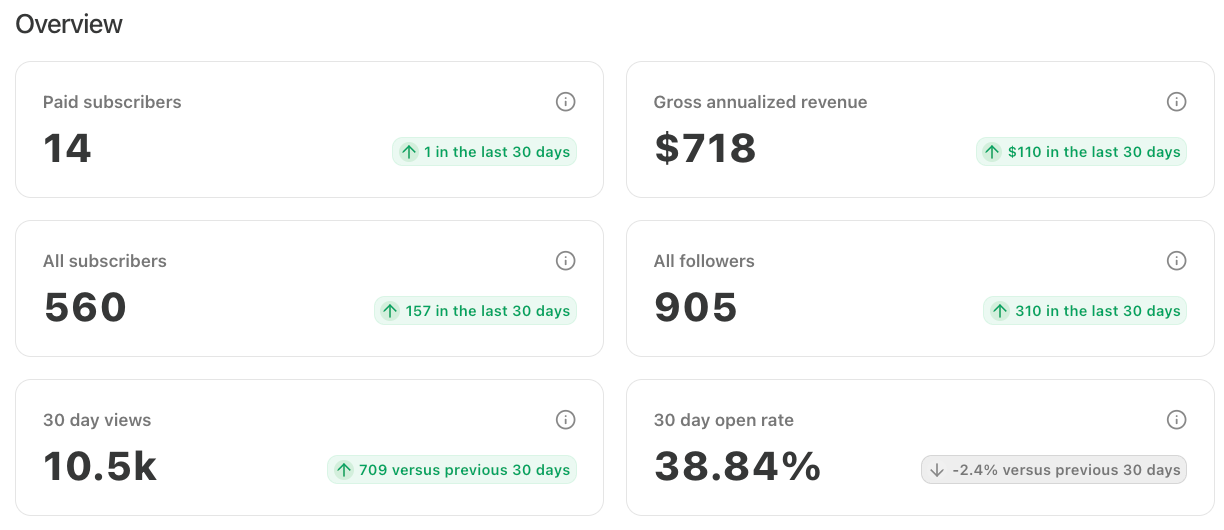

These past 2 weeks I have kept it all moving forward at the same speed. I am really happy about this. I have had 83 new subscribers from the previous week. This makes it my best 2 weeks ever! And 2 new paid subscribers! Thank you so much to you both. I am over the moon about all this.

My (growing) numbers:

This is just awesome. My overall reads are slightly down (from 11K) but I am overall very satisfied with how things are progressing.

My Medium:

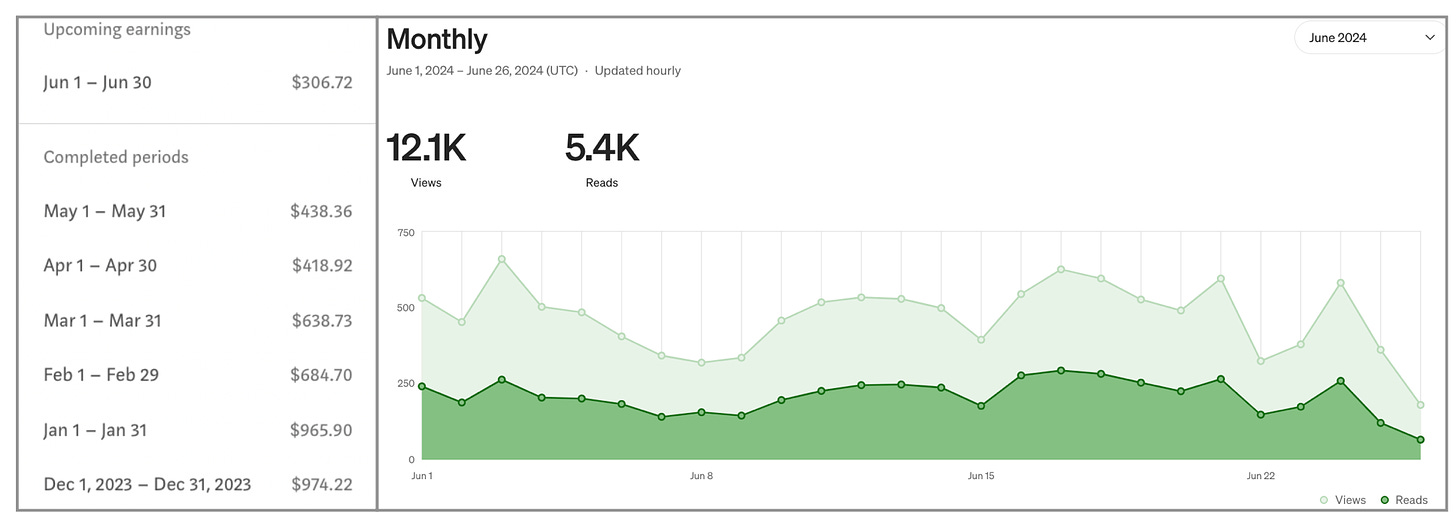

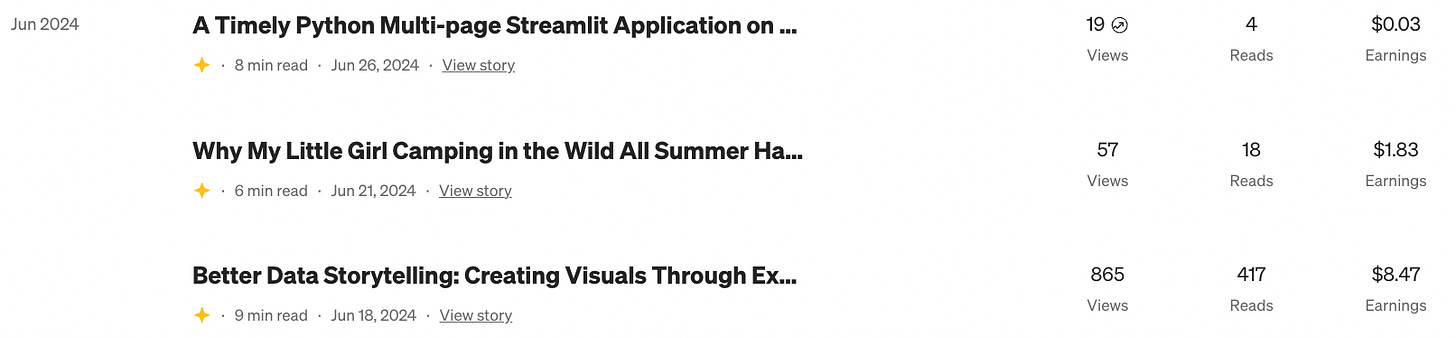

My Medium stats for the past 2 weeks have been down. With a focus on quality articles on Substack, I have only published 6 articles so far in the entire month of June. So far for June:

Right now I am slightly below my pace for the previous two months..Considering the low number of articles, I am actually doing better than I expected. With the minimum amount of time spent here, it really does feel like free money. AND, just today (literally an hour ago), I received a Medium Boost on my most recent article (more below). This will most likely drive me up over the $400 mark again.

My LinkedIn:

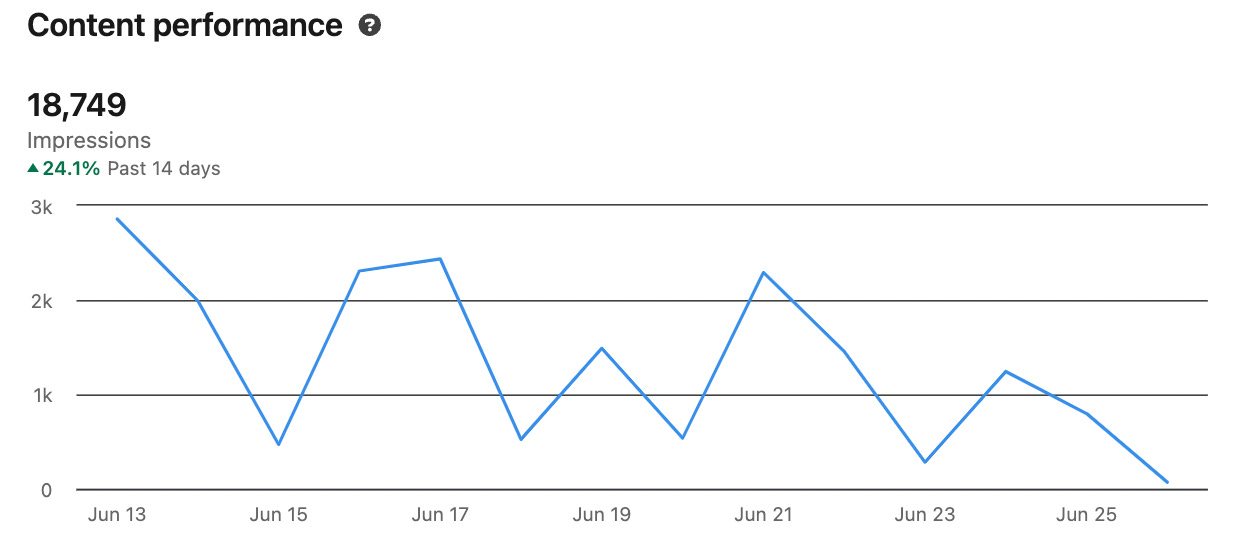

My past 2 weeks on LinkedIn were above average. I had a good number of impressions from the 4 articles that I advertised here from Substack.

This is over 5000 more impressions than my previous 2 weeks.

It is the Python Web Developers group on LinkedIn that still gets me the most action, although I had one “general” post go northwards of 1500 impressions as well. .

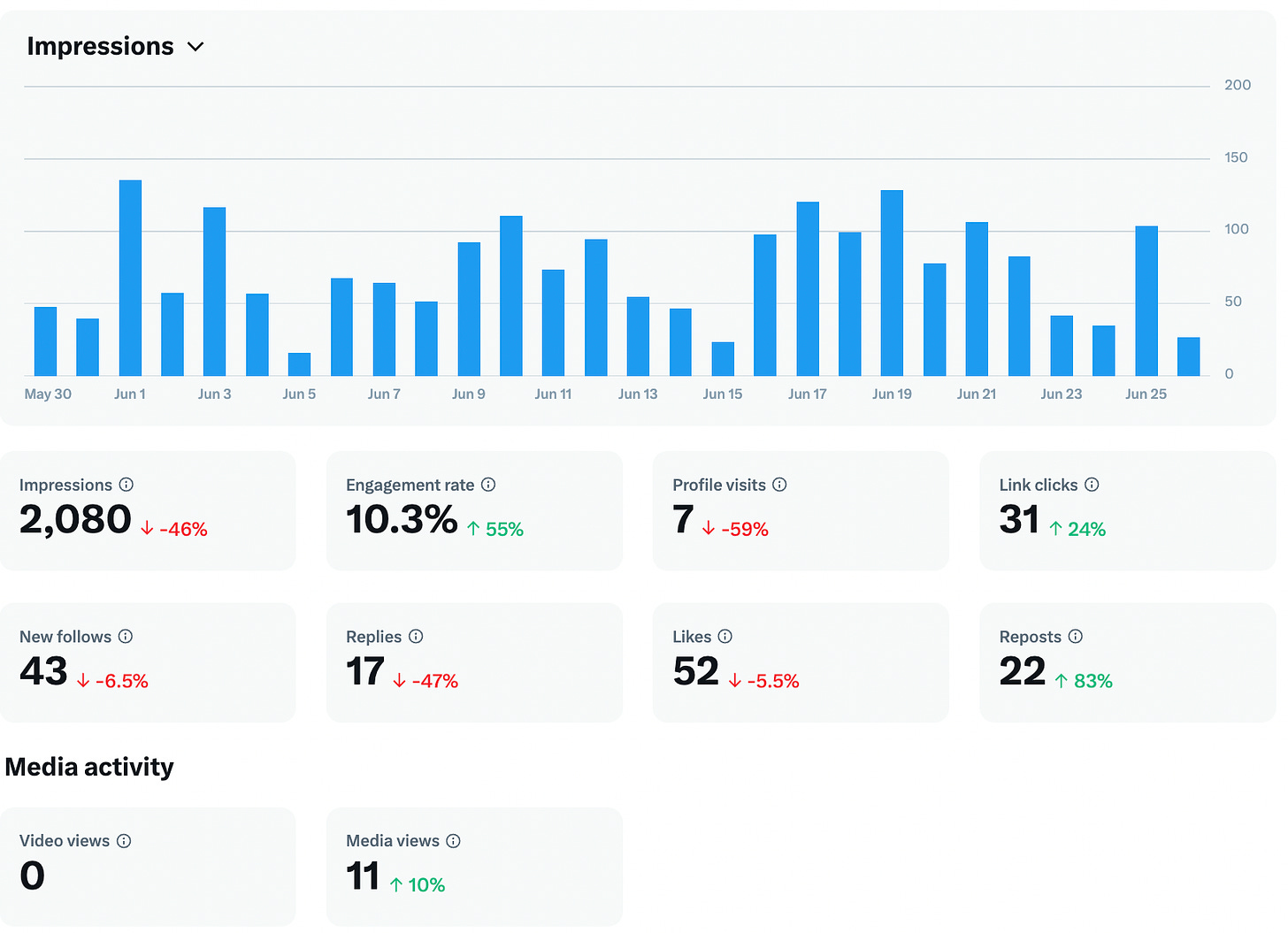

Twitter/X:

Over the past 2 weeks X has been, well, X. AsI had a number of larger accounts follow me in the past 2 weeks - folks who have upwards of 20K followers I have decided to stick with it for now. This has piqued my attention. Overall for June:

I’ve had over 1000 new impressions since my last newsletter and my engagement rate has gone up slightly. This is with even less effort than before. I am now posting the same write-up as I do for LinkedIn and am providing the link to the Substack article in the comments.

Still awful numbers, but for the amount of time that I spend on X, this is no surprise. I am willing to keep doing what I am doing. I paid for a year, so I will keep posting here.

Reddit:

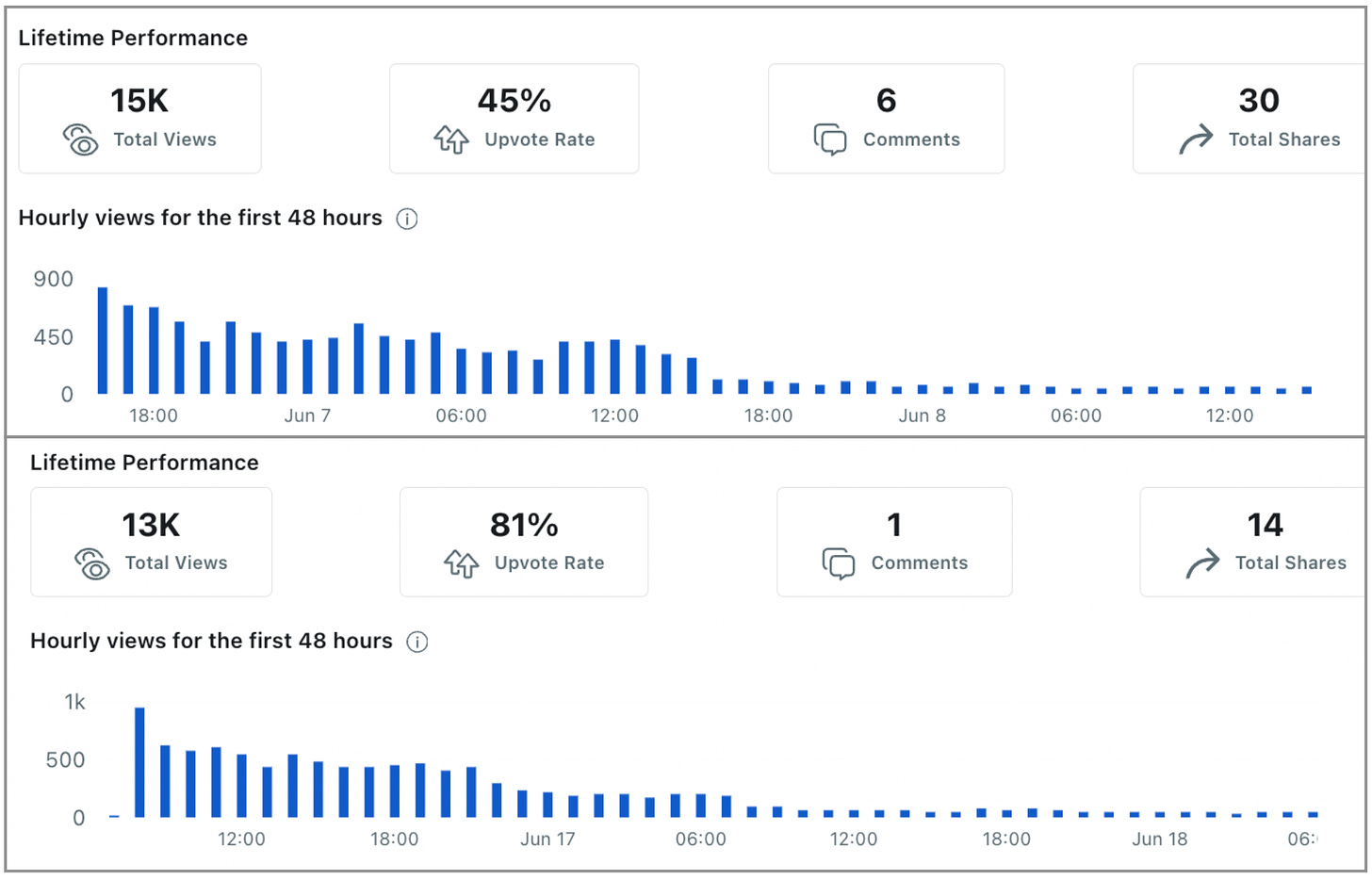

My numbers on reddit have definitely been down My last 2 articles posted there have garnered 15K and 13K respectively.

This is down significantly since the previous two (over 45K each). I think it had partly to do with the time of day that I posted the two articles. I will experiment with posting at a later time for my next article. I have been posting the occasional article on Reddit to see if there is any interest there. I carefully post during the times allowed for tutorials. And I always post as a free article. I had one article do very well in this past 2 weeks:

The two articles respectively were:

How To Create Professional Python Shiny Dashboards In A Jiffy

A Timely Python Multi-page Streamlit Application on Olympic Medal Winning Countries

I will let you know how it goes with my next article post at the time that my previous articles did so well.

2. Thinking, Creating, and Doing

As I mentioned in my last full update, these two weeks for me have been incredibly busy with all of the end-of-school celebrations and packing.

Here in Rome, my daughter is moving up to high school next year. And as of right now, with the automated release of this most recent newsletter, I am actually somewhere over the Atlantic on a flight from Rome to New York.

My wife and I are dropping our daughter off at summer camp. She will be spending 7 weeks in a tent in upstate New York.

With all of this crazy commotion going on, I have only managed to publish 3 articles on Medium in this 2-week period:

But, if you look closely at the latest article, I just received a Boost on it within the last hour! Like any Boost on Medium, I am thrilled and excited about this.

My experiences with Boosts lately are that I will hopefully make between $75 and $200 after the increased views. Something different about this boost though is that I have never had an article Boosted in the Level Up Coding publication. It has over 100K followers so maybe this article will do a bit better than previous ones..

One of the other articles I wrote is about my daughter’s experience at camp last year and how it will be different this year.

If you’re interested, it is posted in my other Substack:

Once we drop our daughter off, I will be heading out to the wild northwest coast of Canada to visit with my family. I am anticipating that I will have lots of time to sit and think, and to write.

My most successful article published on Medium this month (by far) is my book review on Nathan Yau’s new version of Visualize This. It has already made me over $30 with 1500 views and 1000 reads. All this without receiving a Boost.

And as of today, I do have 2 more articles lined up in the publishing queue - which is a nice feeling.

As I have fewer commitments work-wise over the summer, I am confident that I can increase my production over what it has been recently.

The other big commitment I made this past 2 weeks was to sign up for a year paid membership to Walter Rhein’s Empowering Progressive Writers Substack.

Walter is a Medium Boost writing machine. He consistently writes article after article that receives this coveted Medium Boost and he has generously helped me with some tips, advice, and editing on some of the writing that I have been putting together recently.

I will keep you posted on how these articles fare.

So for the next two weeks my focus will be:

Continued outreach and engagement on Substack (of course!).

4 technical articles (2 in Programming, 2 in AI) published on Medium

Creating a book chapter every 2 weeks (this one has recently fallen through the cracks)

Idea generation - I have been making progress here.

For #4, I am sure a trip home will give me all the ideas I need. Many of my family members are artists/writers so I always find inspiration talking with them.

3. Tutorial - Khan Academy’s GPT-4 Code Tutor

Productivity is an important metric that differentiates between those who are great coders and those who are merely good.

You want to be great? This takes not only dedication but also smarts. A smart way to work towards great is to take advantage of the tools that are available.

To optimize your initial development as a Streamlit coder you can use Khan Academy’s Code Tutor.

This tool differs in it’s approach by providing:

Prompted User Engagement and Understanding

incremental step-by-step instructions

Check in after each step to engage user on their level of understanding

Here’s how I utilize Code Tutor to accelerate my Python streamlit coding skills.

Accessing Khan Academy’s Code Tutor

The Code Tutor GPT is available for anyone who has a GPT-4 license.

You can access the GPT by clicking HERE.

You must sign in to ChatGPT Plus to use this particular GPT. This is the way forward with most GPTs created by private companies like Khan Academy.

Once you are logged in with your ChatGPT Plus account, you can start prompting away.

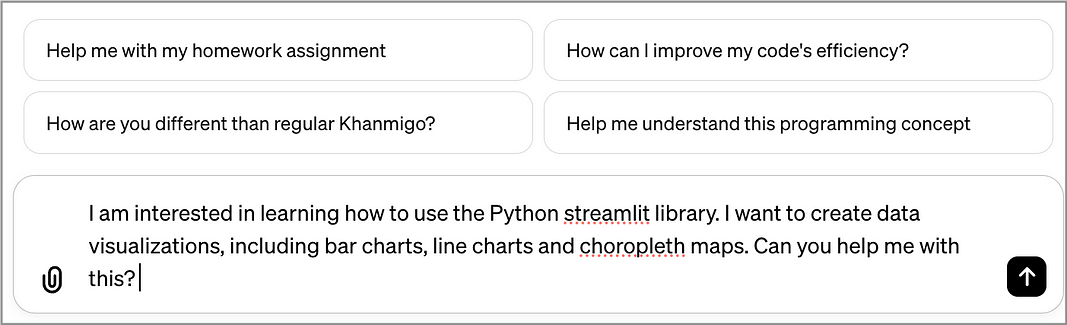

Now if I am a decent coder but I am not an expert at streamlit —my goal may be to create data visualizations with streamlit. Specifically, how about an interactive dashboard with streamlit from a CSV file, AND a global choropleth (heat) map in the dashboard.

I am hoping Code Tutor can help me with this.

Where do we start? Let’s prompt Code Tutor to find out:

Code Tutor’s Response:

Code Tutor informs me that it can help me with:

Getting Started with Streamlit — do you need guidance on setting it up on your computer?

Understanding the Basics — are you familiar with the basic structure of a streamlit app, including how to run it?

Creating Data Visualizations — do you have a specific dataset in mind that you’d like to visualize, or would you like an example to start with?

Choropleth Maps: For choropleth maps, we can use plotly. Do you have spatial data you’re looking to visualize, or should we work with sample data?

Wow, OK, this hits all of the main points for me. So why not start from the beginning?

Setting up the Code Tutor Learning Environment

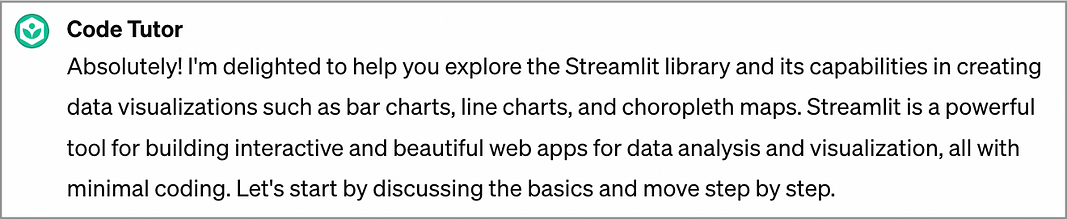

With my extensive experience on using various GPTs, I know that I can upload a CSV file here and ask the GPT to take a look at it. And then I can use the CSV as the data source for all of the streamlit examples.

This is a much better option than asking the GPT to create sample data for me. The data GPT generates is almost always senseless garbage. As a Comp Sci prof, I always ask my students to give me meaningful data in their assignments. Let’s do the same.

My Sample Dataset

For data visuals that include choropleth maps, a global dataset that spans multiple years is needed. There is lots of great data from the United Nations and other development agencies that work well.

For this exercise, let’s use the global happiness index (GHI) downloaded HERE.

This version of the GHI contains global values from 2015–2022. This works great as a learning dataset. I have country data over time and from this, I can create time-series visualizations AND maps.

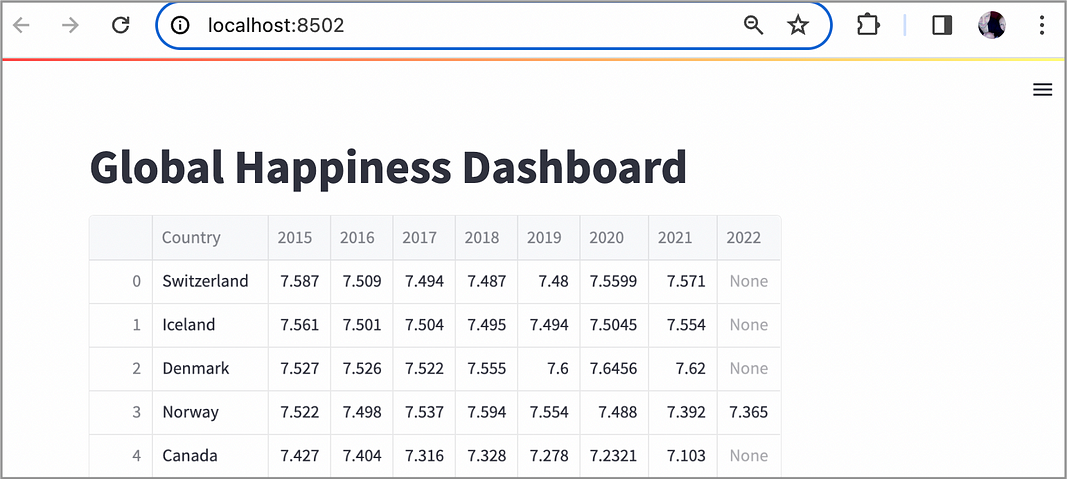

Once I have this dataset downloaded (I saved it as global_happiness.csv), I can then go into the Code Tutor window and upload the file for analysis:

OK, this looks accurate to me. I can see a country name followed by 8 years of data, each in its own column with the year as the column name.

We’re all ready to get on with it!

Learning Streamlit With Code Tutor

For this exercise, let’s follow ALL the steps outlined by Code Tutor to ensure maximum learning.

Now, knowing that Code Tutor has a solid grasp of the data set, we can prompt it to use it for all examples (which are very important).

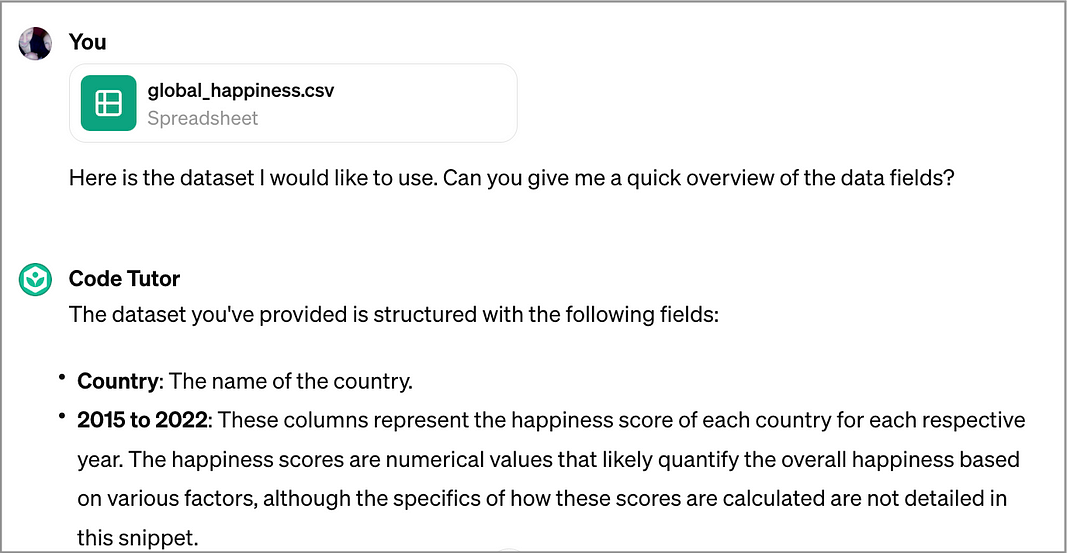

Prompt to Code Tutor: OK, great. I would like to use this data set for ALL relevant examples. And I would like to start from the very beginning with how to install streamlit and how to create a very basic streamlit app.

Response from Code Tutor: Fantastic! Starting from the very beginning is a great way to ensure you have a solid foundation. Let's begin with the installation of streamlit and then create a basic streamlit app.

Wow, this tool is very enthusiastic. I wouldn’t expect anything less from Khan Academy. But can it do the job? Here’s Code Tutor’s response:

Step 1. Installing streamlit

To install streamlit, from a command or terminal prompt:

pip install streamlitStep 2: Creating a Basic Streamlit App

Here’s how to create a basic streamlit app:

Create a Python file for your app: Open your text editor or IDE, create a new Python file named

app.py(or any other name you prefer), and open it.Write a simple streamlit app: Copy the following basic streamlit code into your

app.pyfile. This code will display a simple message on your app.

import streamlit as st

# Title of the app

st.title('Hello Streamlit!')

# Display a simple message

st.write('This is my first Streamlit app.')

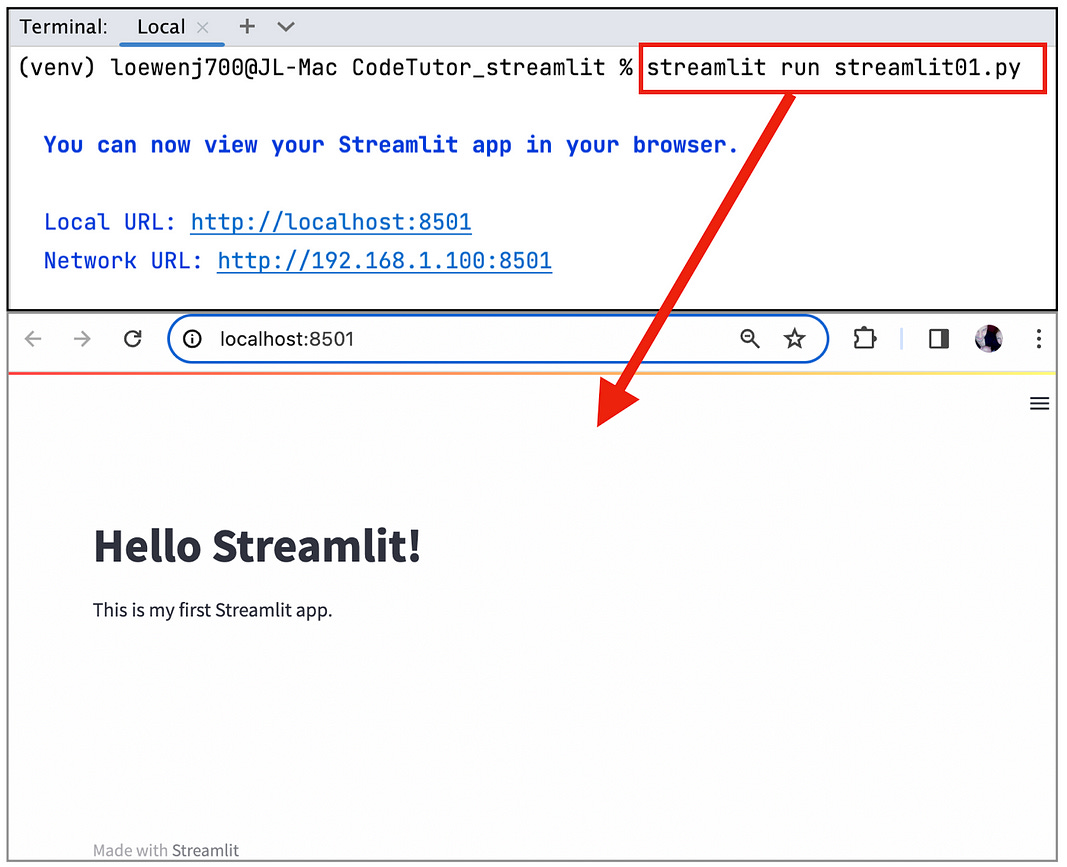

3. Run your Streamlit app: Save your app.py file. Then, open your terminal or command prompt, navigate to the directory where your app.py file is saved, and run the following command:

streamlit run app.pyStreamlit will start your app in your default web browser at the URL shown in your terminal (usually something like http://localhost:8501).

This all looks really good so far! The next step is to copy and paste this code into our development environment. Now you can use whichever IDE or editor you are most familiar with.

Personally, I have been a PyCharm user for many years now, so I am going to create a new project in PyCharm to run this streamlit code.

If you want to use it as well, you can download the community (free) version of PyCharm HERE. (scroll down to the Community version).

Running the Streamlit App in My IDE (PyCharm)

In PyCharm, we can start fresh with a new Project (CodeTutor_streamlit) and a new file (File/New/Python File).

Next, we copy the code from the Code Tutor window and paste it into the new file and clicked File/Save.

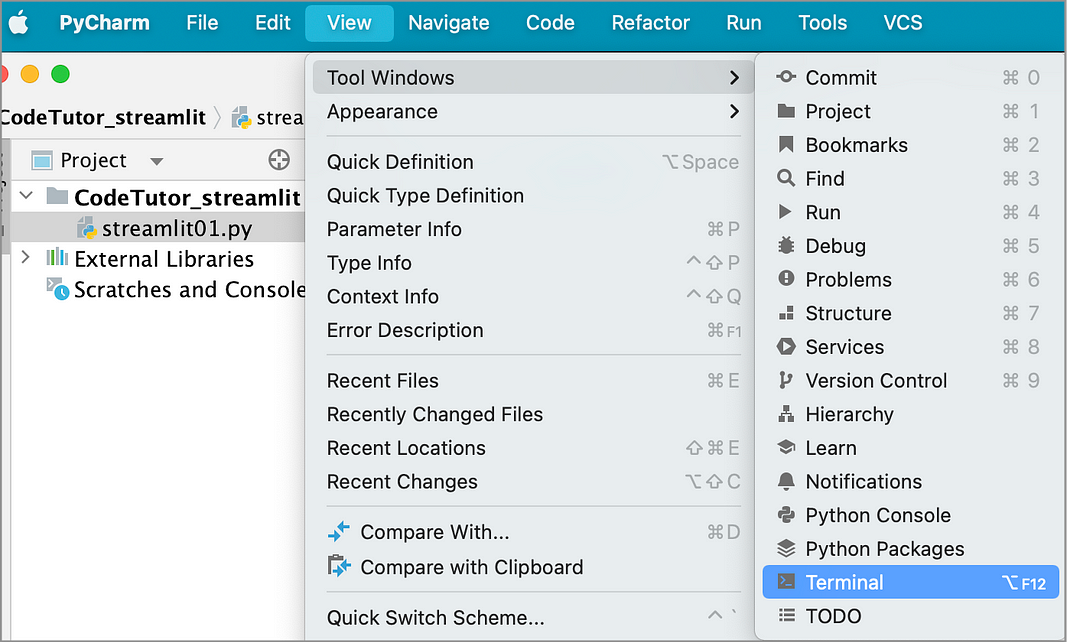

Next, we can run it! Following the instructions from Code Tutor, we can run the command from a Terminal prompt (I am using a Mac).

One of the great features of PyCharm is the ability to run streamlit apps directly from the IDE.

How, you ask? Like so:

We can now go to the Terminal window and type the streamlit command there:

And presto, our (very simple) streamlit app is created!

So far, great job, Code Tutor! Thank you.

Now on to creating the cool stuff — the data visualizations!

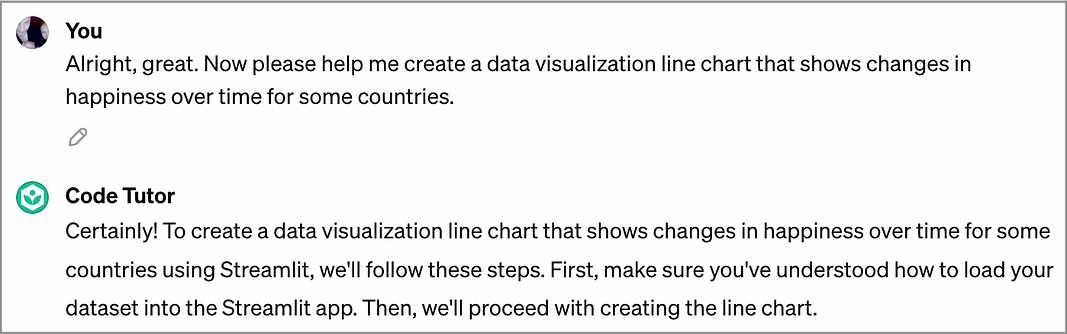

Creating Streamlit Data Visuals With Code Tutor

This is where we really need a Code Tutor to help us out. Personally, I have no idea on how to create data visualizations with the streamlit library.

We can start with a line chart that compares a few countries over the years — to see which countries may be happier, or less happy, over the range of years in the dataset.

It looks like Code Tutor has an understanding of what we want to do. Code Tutor steps us through how to access the CSV file:

import streamlit as st

import pandas as pd

# Title of the app

st.title('Global Happiness Dashboard')

# Load the dataset

data_path = 'global_happiness.csv' # Make sure the path is correct

data = pd.read_csv(data_path)

# Display the dataset

st.write(data)I really like that Code Tutor stops here and allows me to test the code — to see what it does, and to ensure that it is working. The code access the CSV file and stores it in a pandas data frame. The pandas library is the most commonly used (and best) Python library for storing a dataset. And it works great with streamlit.

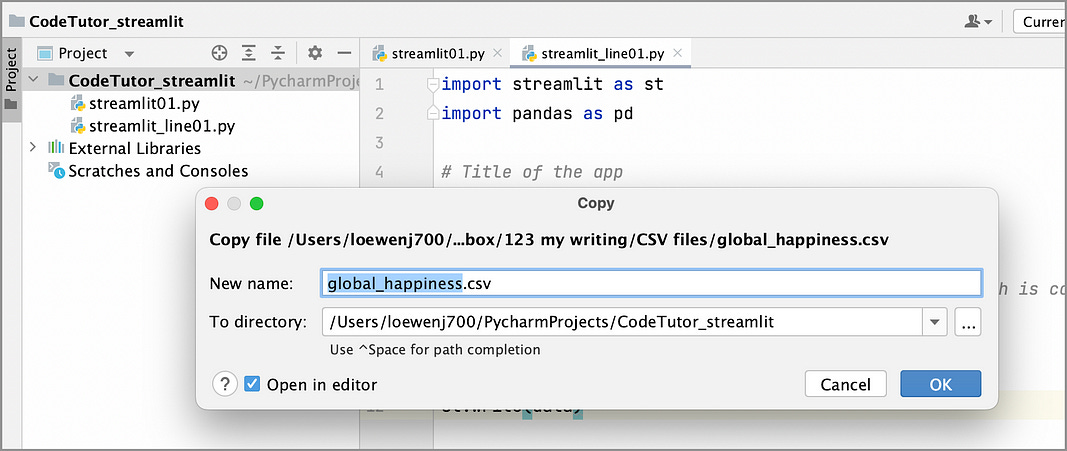

To ensure that this code snippet works, perform a Copy/Paste of the CSV file to include it in the project:

Great! Now we can test the code to see if it works. The result of st.write(data):

We can see a table of data with rows and columns that are organized by Country and by each individual year. Looks great.

The second snippet of code provided by Code Tutor:

# Assuming 'Country' is set as the index if not already

data.set_index('Country', inplace=True)

# Select a subset of countries for visualization

countries_to_visualize = ['Switzerland', 'Iceland', 'Denmark', 'Canada']

# Filter the dataset for the selected countries

filtered_data = data.loc[countries_to_visualize]

# Transpose the dataframe to have years as the x-axis and happiness scores as the y-axis

transposed_data = filtered_data.transpose()

# Create a line chart

st.line_chart(transposed_data)We can Copy this code and Paste it to the end of the previous snippet. And the results:

Awesome! This is going so well.

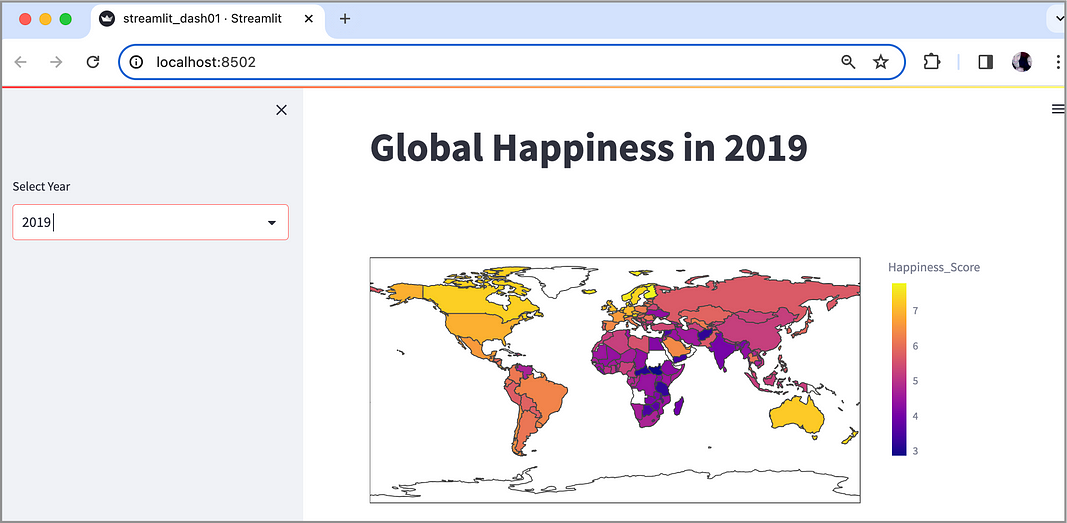

Now let’s create a choropleth map — as a dashboard where the user can select the year and the map will display accordingly. To prompt Code Tutor to help us:

Prompt to Code Tutor: Please step me through how to create a streamlit dashboard that allows the user to select a year that displays a global choropleth map for that year.

Response from Code Tutor: Creating a Streamlit dashboard that allows the user to select a year and then displays a global choropleth map for that year involves a few steps.

Code Tutor Step-by-Step Guide to Choropleth Map

Ensure Plotly is installed: If you haven’t installed Plotly yet, please do so.

Modify the Streamlit App: Here’s how you can integrate the selection and mapping features into your app using your dataset.

Import Libraries:

import streamlit as st

import pandas as pd

import plotly.express as pxCode Tutor notes the addition of the plotly library here: the plotly library is needed in order to create the choropleth map.

Load the Dataset:

data_path = 'global_happiness.csv' # Adjust path as necessary

data = pd.read_csv(data_path)Add a Year Selector:

year = st.sidebar.selectbox('Select Year', options=[str(y) for y in range(2015, 2023)])Code Tutor notes that it is: adding a sidebar selector for the year.

Prepare Data for the Selected Year (dropdown):

data_for_map = pd.DataFrame({

'Country': data['Country'],

'Happiness_Score': data[year]

})Code Tutor notes that it is: creating a new DataFrame that includes just the country names and the happiness scores for the selected year

Create and Display the Choropleth Map:

fig = px.choropleth(data_for_map,

locations="Country",

locationmode="country names",

color="Happiness_Score",

hover_name="Country",

color_continuous_scale=px.colors.sequential.Plasma)

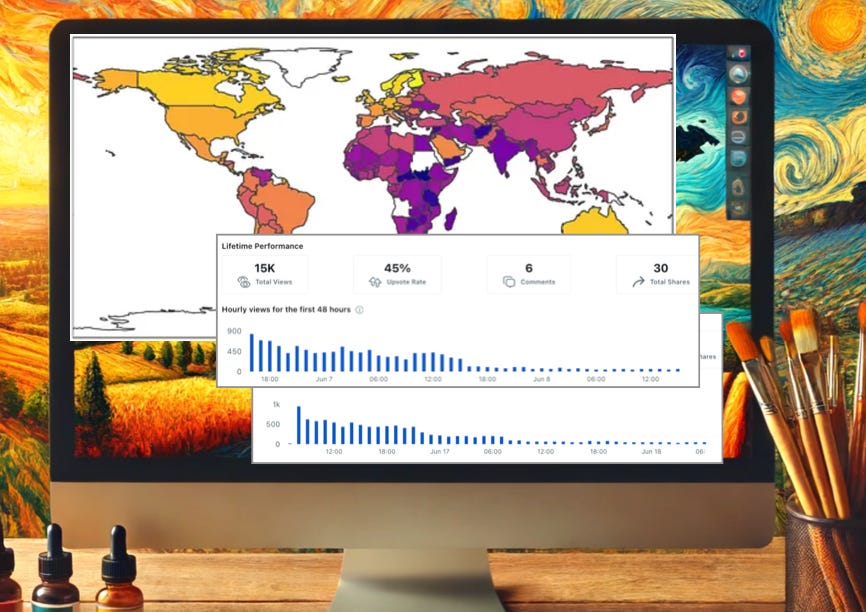

st.plotly_chart(fig)And if we Copy/Paste all this code into a new file (streamlit_dash01.py) and run it from the terminal prompt, the result:

Fantastic. The user can choose the year on the left-hand side, and the map is updated on the right-hand side.

We have managed to go from having little to no streamlit knowledge to creating a data visualization dashboard in less than an hour.

I would highly recommend that you try the Code Tutor tool.

An important note: In my extensive experience with using GPT-4 tools to access a CSV file, the key to success is to ensure that you hold the GPT’s hand tightly by continuous prompting that it must use the CSV file for all examples. If you do not, you run the risk of wasting time and extra prompting when forgets (and it usually does).

In Summary…

For this type of learning task, working through a step-by-step tutorial, the Code Tutor GPT works fantastic.

It went through each step that I asked for in detail, and it did not lose its place — not once. This is impressive.

The difference between Code Tutor and the generic GPT-4 chat window is that Code Tutor provides very detailed step-by-step instructions with lots of checkpoints to ensure that you understand each concept.

Code Tutor breaks the learning down into smaller, bite-sized chunks. For a beginner (as I am with streamlit), this is a terrific feature.

Download your favorite data set and give it a go.

And that’s all for this newsletter, folks.

See you all again soon!