Data at Depth 15: Sustained Growth, Part-Time Reality, Gold Medal Maps With GPT-4

My Substack is growing fast, the realities of the part-time creator, GPT-4 for the gold

Today, a few topics:

My Data at Depth: documenting my own creator journey - extensive data analysis across platforms for the past 2 weeks - Substack is sustaining, Medium is running at medium pace, a few good posts on Linked In, reddit, and X is still alive!

Thinking and Creating: Some insight into what I have been creating and doing during the past 2 weeks (pre-summer responsibilities, idea generation)

Doing - Tutorial: Medal-worthy historical Summer Olympic data visuals with modular prompting. A simple global choropleth map with GPT-4.

Let’s get to it!

1. My Data at Depth - My Raw Stats

It’s been a good two weeks. I am making progress and slowly growing across multiple platforms.

My Substack:

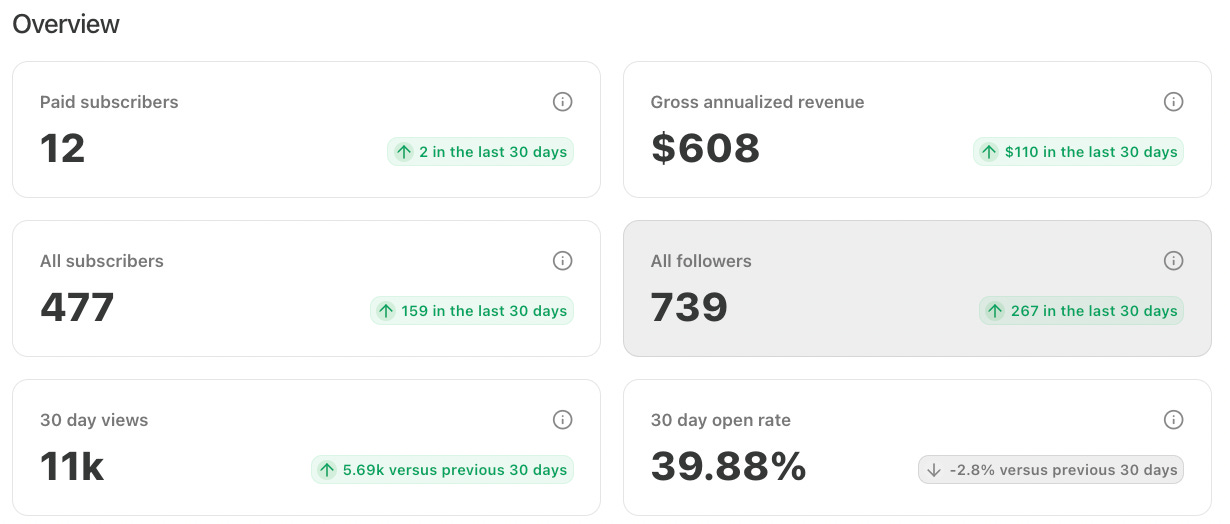

These past 2 weeks I have maintained the momentum from the previous two weeks - 75 new subscribers in 14 days. That’s just a 6 below my all-time best of 81. A Thank you so much for supporting my writing!

And again, with this increase in subscribers, I have seen an even higher number of views - 11,000 for the past 30 says. This is an all time record for this newsletter.

My (growing) numbers:

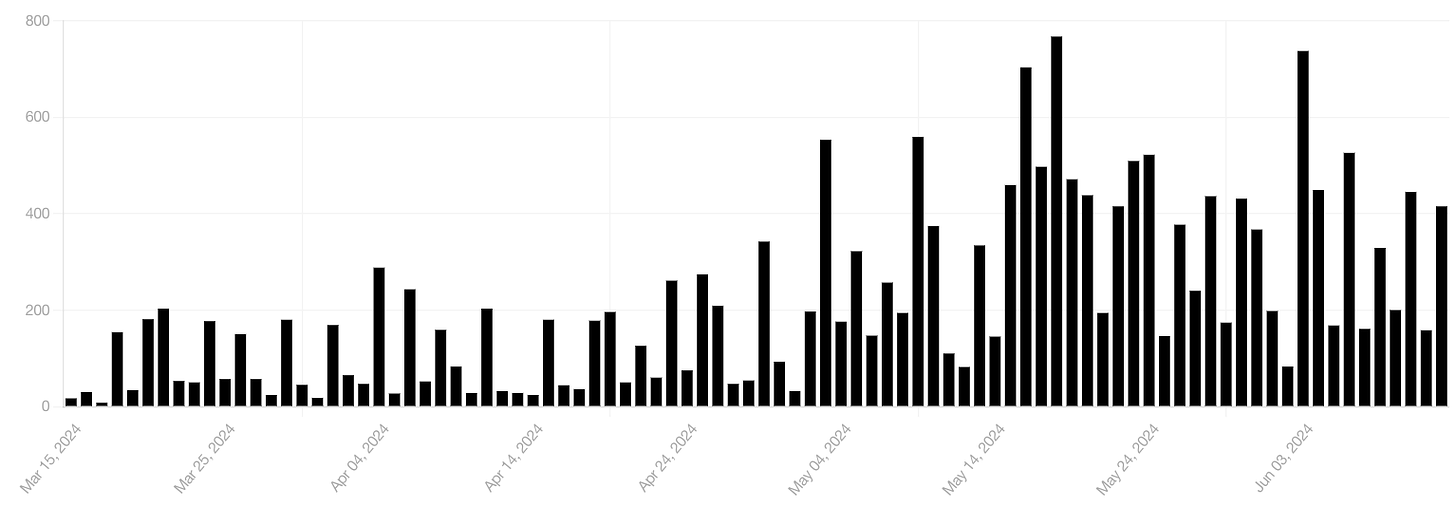

The one bummer for this period is that I did lose one paid subscriber. When you don’t have very many, this is unfortunate, but as the old saying goes, you can’t keep everyone happy - although I do try pretty hard. Looking at the total views over the past 90 days:

You can see that the daily averages have definitely increased. Like most newbies on Substack, I am kicking myself for not having started earlier on this platform (like years ago). More subscribers means more views, means more potential paid customers. I am looking forward to going over 500 in the next 2 weeks. Heck, why not even 600!

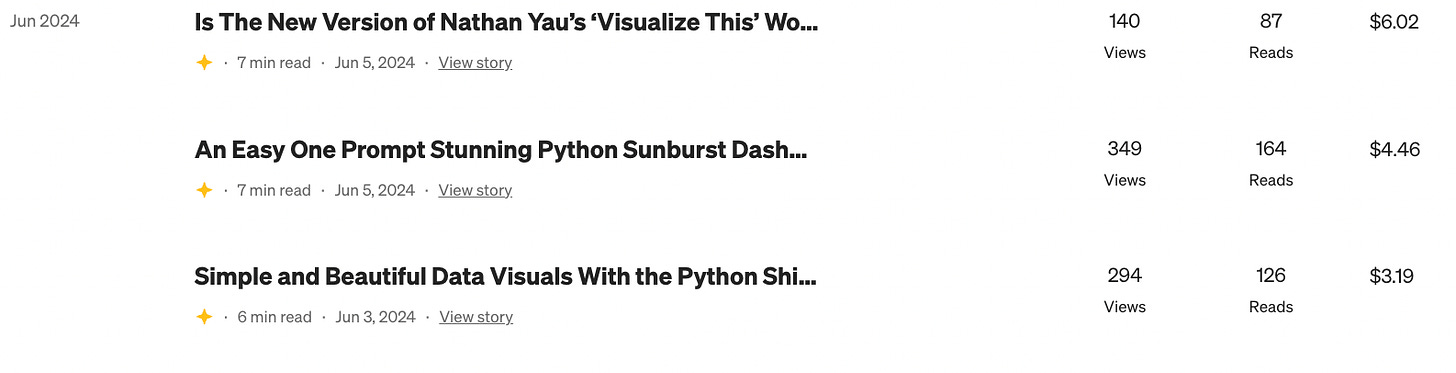

My Medium:

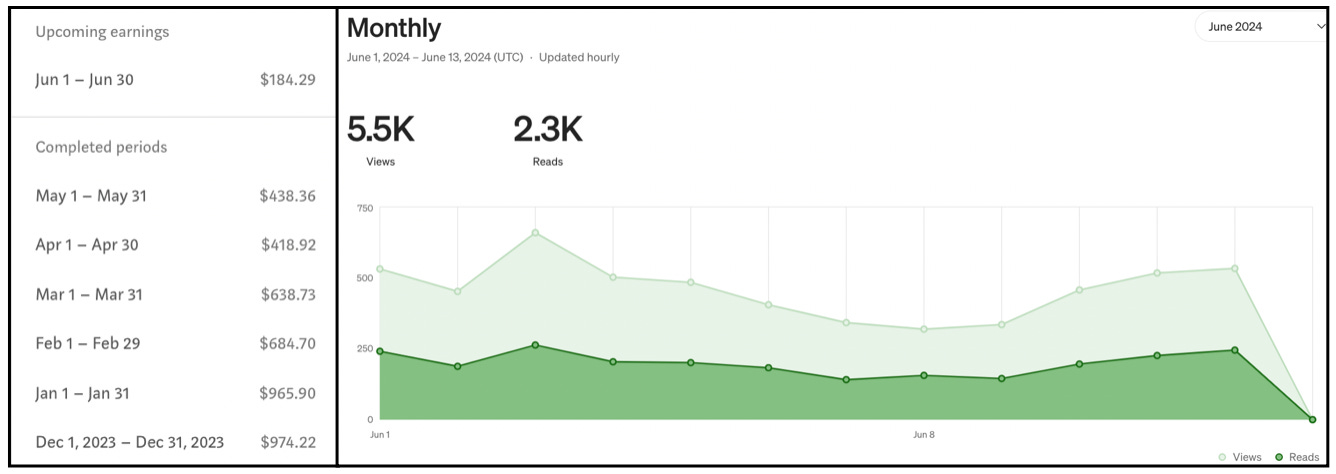

My Medium stats for the past 2 weeks have been well, about average - and with a reduction in articles, this is no surprise. So far for June:

It looks like I am on about the same pace as the previous two months. The interesting thing this month is that because I’ve been focusing on Substack, I’ve only posted 3 articles on Medium. So this almost feels like free money. I have a few more articles in the queue so I’m hoping to go over the $500 barrier this month.

My LinkedIn:

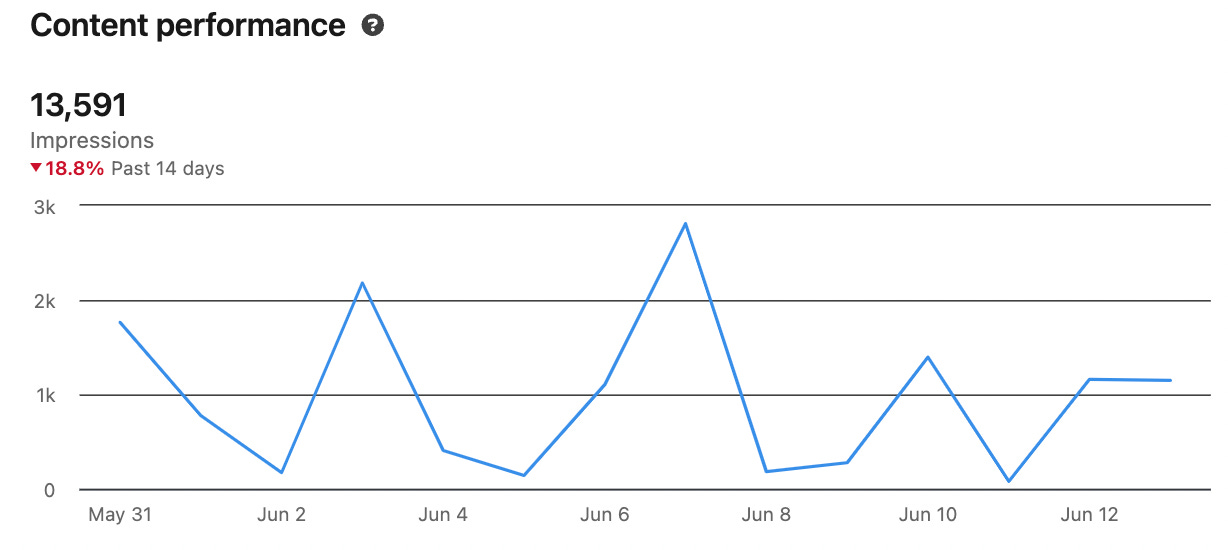

I have had middling success here in the past 2 weeks. By the numbers, 2 articles garnered some decent interest:

This is around about my average over the past 6 months. Considering I spend little to no time on LinkedIn, it is worth the few minutes it takes to write a short intro for each article and to post it.

The Python Web Developers group on LinkedIn is still the group for me that gets the most action. I’m usually somewhere between 2,000 and 4,000 impressions and 10-15 likes for each article that I post here.

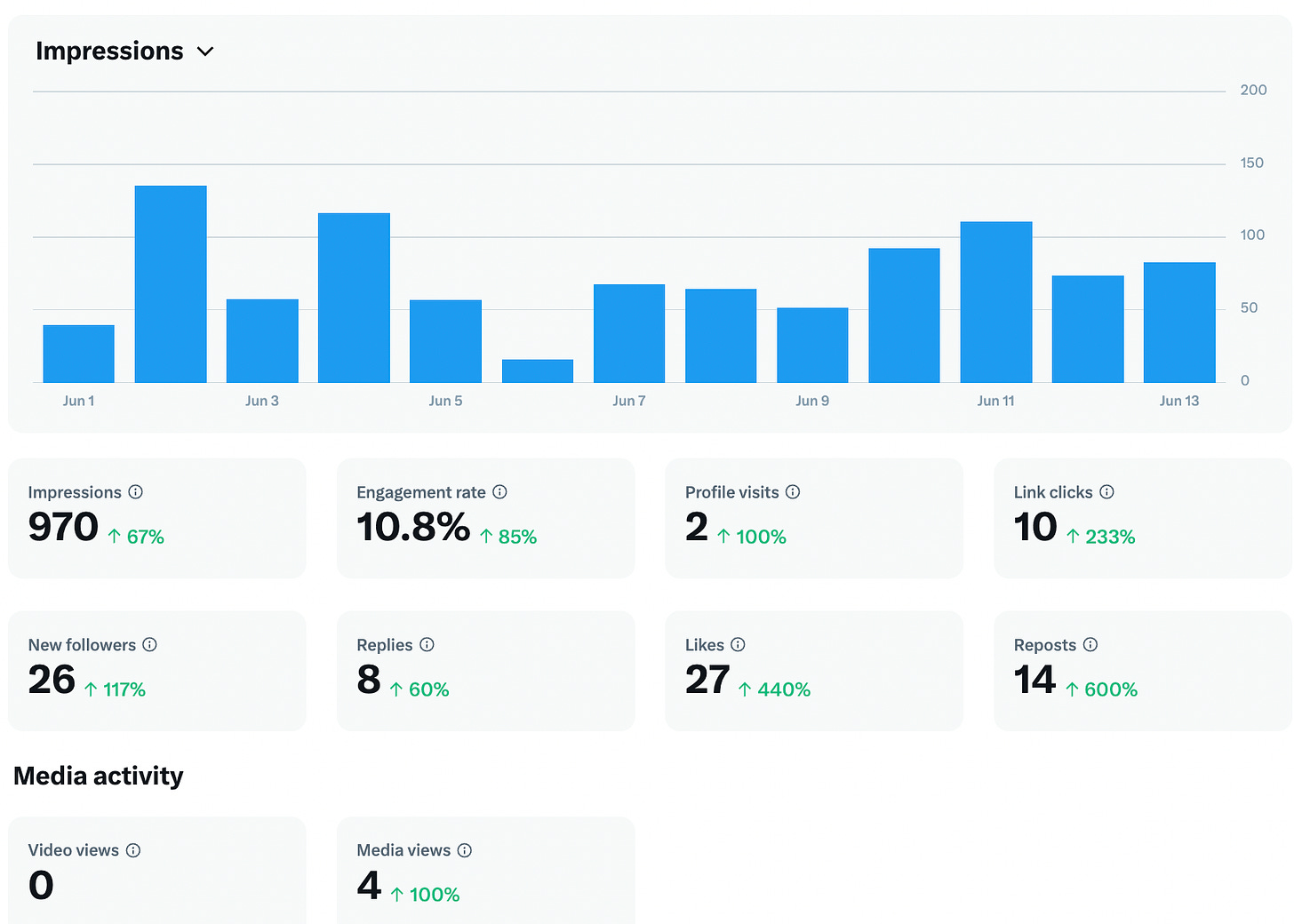

Twitter/X:

Well, I have had an interesting few weeks on X. I had mentioned that I would pretty much dump this platform - and I pretty much have. But I have had a number of larger accounts follow me in the past 2 weeks - folks who have upwards of 20K followers. This has piqued my attention. Overall for June:

Yes, my statistics are pretty sad. I am in the high 500s now for total followers but as I post only my articles, I just am not generating much traffic. I am still trying to figure out how to best integrate my overall writing with generating useful posts here - using very little of my valuable time.

Reddit:

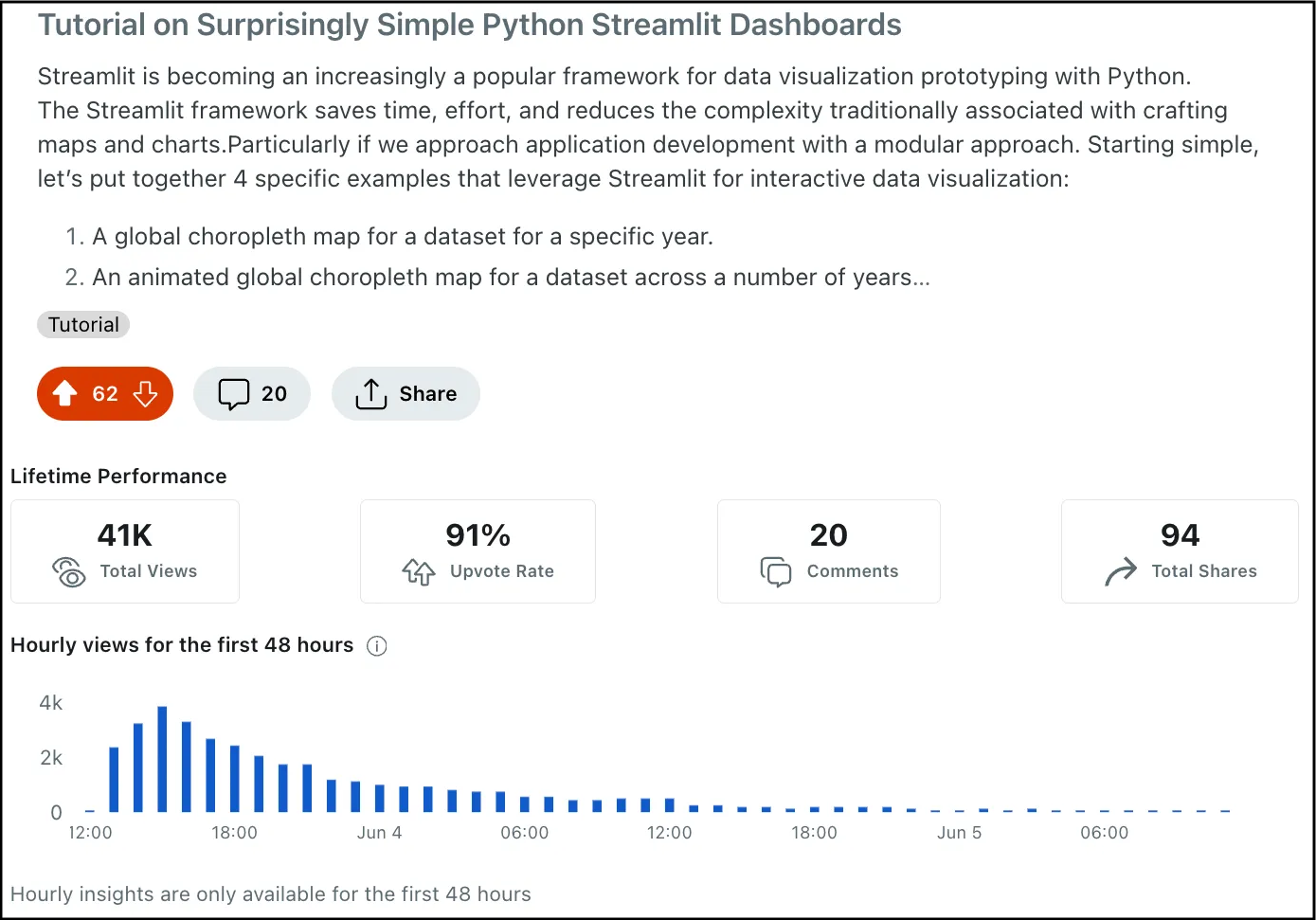

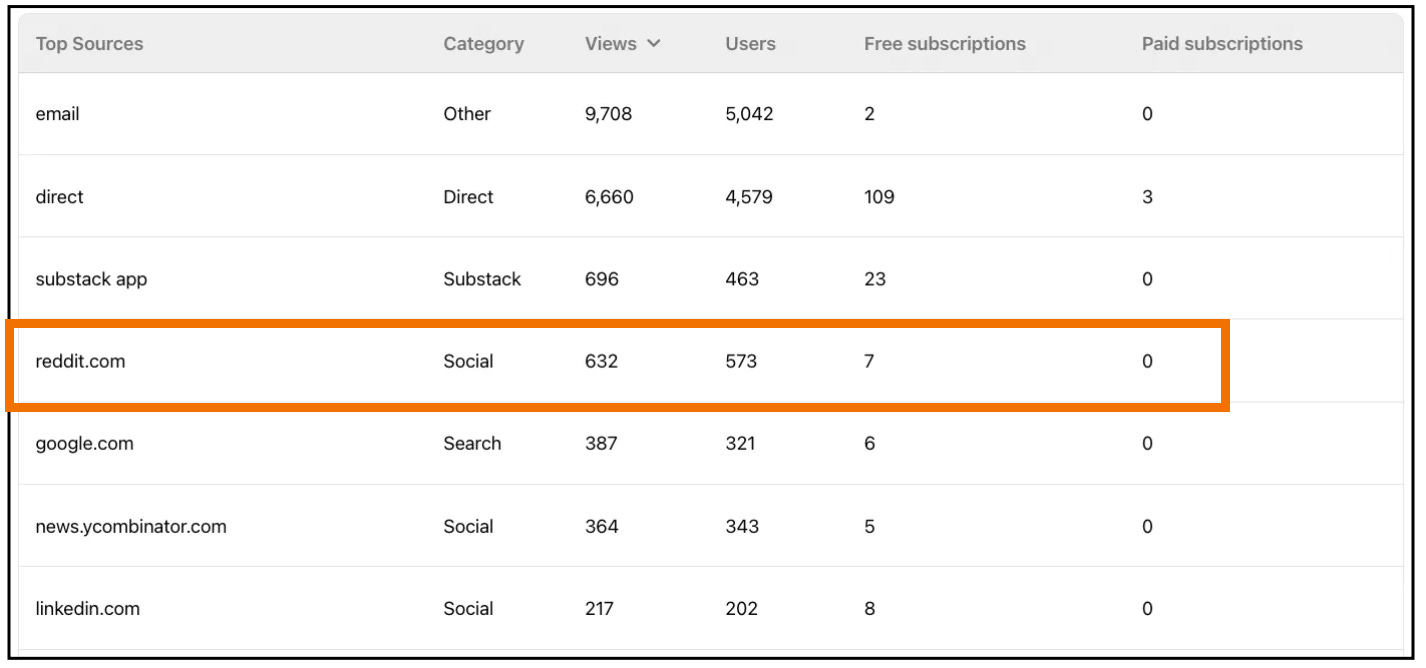

I have been posting the occasional article on Reddit to see if there is any interest there. I carefully post during the times allowed for tutorials. And I always post as a free article. I had one article do very well in this past 2 weeks:

This has been driving some traffic and a few subscriptions my way, which is nice. And it only takes me 30 seconds to copy/paste the post I’ve set up for LinkedIn over into reddit.

You can see from the above image that I really am getting some traffic - even more than from LinkedIn (which was surprising to me).

2. Thinking, Creating, and Doing

These past two weeks for me have been incredibly busy. With my wife away on a business trip to Budapest for a full week, my responsibilities at home were definitely elevated. So goes the life of a part-time creator.

Sometimes things have to give - and as my number 1 part-time creator priority is Substack, some other list items get pushed down.

As such, I have only managed to publish 3 articles on Medium so far this month:

I do have 3 more lined up in the publishing queue and I am hoping they will be accepted soon. The past 2 days, the Book review article on Nathan Yau’s new version of Visualize This has started to pick up steam. Yesterday alone I had 57 member views and 50 reads. That is a crazy-high percentage for Medium. I am hoping that maybe it will get picked up for a Boost? We will see.

The next two weeks will be extremely busy with all of the end-of-school celebrations. My daughter is moving to the “big kids” school next year and is excited about that. And the day after school ends, we are on a plane back to the USA to drop her off at a 7-week summer camp in New York State. This requires a lot of up-front planning and organizations. She spends the entire time sleeping in a canvas tent.

As this has been on my mind recently, I wrote an article (in my other Substack) about how sending her to camp fits into our lives:

It is really hard to have her away from us for these long periods of time but it certainly gives both my wife and I more time to focus on our own projects. So both sad, and happy!

So for the next two weeks my focus will be:

Continued outreach and engagement on Substack (of course!).

Article idea generation - I have been struggling here. I have some articles queued up to read and review on how to revitalize this process

Creating a book chapter a week (4 down so far!)

Another kick at X (I plan to spend a full day setting up an automated approach)

With item #2 that I plan on stepping back a bit to re-invigorate the article idea generation process. I’ve felt a bit stagnant lately. Sometimes ideas just flow and I have too many ideas - lately it’s been a bit of the opposite. I am hoping some focused time here will give me a stepping stone across this river.

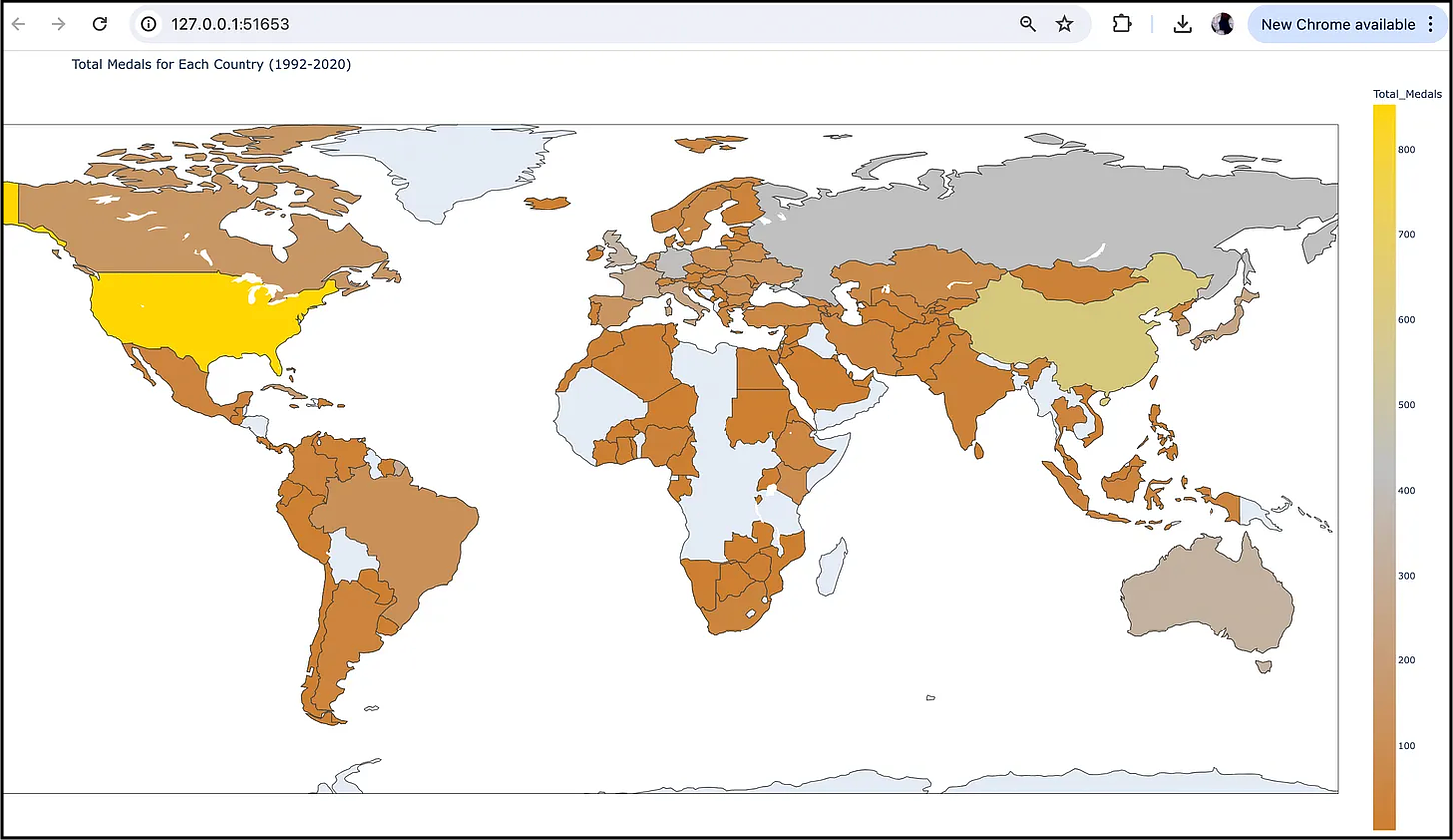

3. Tutorial - Medal-worthy Olympic data visuals with modular prompting

With the 2024 Paris Olympics just down the road, it’s a good time to break out historical global medal counts for each nation.

With modular prompting, GPT-4 can use the Python Ploty library to create a beautiful global choropleth map

How? Using well-formulated modular prompts.

Let me show the modular steps to a global choropleth map showcasing summer Olympic medal winners.

The Dataset

The data used to accomplish this task is the “Olympic Medals by Country” dataset. It is available on Kaggle, HERE.

In this dataset, the data is organized by year, country, and a count of “Gold”, “Silver” and “Bronze” medals. For the sake of a more “complete” set of data points, we will just extract the totals since 1992 (when all Eastern European countries joined independently).

Step 1: Load and preprocess the dataset

First, we need to load the dataset from the “Country_Medals.csv” file and preprocess it.

We will replace any instance of “United States” with “United States of America” in the ‘Country_Name’ column.

And interestingly enough, the separator in this dataset is a semi-colon “;” rather than a comma — so let’s make sure we let GPT4 know about this as well.

First, let’s upload the file to the GPT-4 window:

Then, we can prompt GPT-4 to set up our data frame.

Prompt text to ChatGPT-4: Please provide a Python code snippet to load the dataset from the attached CSV file using pandas, and replace “United States” with “United States of America” in the ‘Country_Name’ column. Then filter the dataset to include only the rows with years between 1992 and 2020, inclusive.

Response from ChatGPT-4:

import pandas as pd

# Load the dataset

df = pd.read_csv('Summer_olympic_Medals.csv', sep=',')

# Replace "United States" with "United States of America" in the 'Country_Name' column

df['Country_Name'] = df['Country_Name'].replace('United States', 'United States of America')

# Filter the dataset for the years 1992 to 2020, inclusive

df_filtered = df[(df['Year'] >= 1992) & (df['Year'] <= 2020)]Our prompt must change the name of the United States in our data set to ensure we can extract the data correctly.

Step 2: Create a Choropleth Map Visualization

Next, we create a map visualization using Plotly to display the total medals by country from 1992 to 2020.

In order to display the results by country on a map, we need a second file called ‘world-countries.json’ file. This file is used to define country boundaries. You can download a copy of this file HERE.

Make sure this file is accessible to your Python code (i.e., Include the file in the same directory as your Python file).

Now we are ready to prompt for our map.

Prompt text to ChatGPT-4: Using the filtering code from the previous step, please provide a Python code snippet to create a Plotly choropleth map visualization displaying the ‘Total Medals’ for each country from 1992–2020.

Use the file world-countries.json to define country boundaries

The Total Medals are calculated by adding up the Gold, Silver, and Bronze values for each country.

Use the file ‘world-countries.json’ to set country boundaries

Use a gold/silver/bronze custom color scheme

Ensure all country boundaries are included and countries without medals are white

Response from ChatGPT-4: Certainly, here is the Python code:

import pandas as pd

import plotly.express as px

import json

# Load the dataset

file_path = 'Summer_olympic_Medals.csv'

df = pd.read_csv(file_path)

# Replace "United States" with "United States of America" in the 'Country_Name' column

df['Country_Name'] = df['Country_Name'].replace("United States", "United States of America")

# Filter the dataset to include only the rows with years between 1992 and 2020, inclusive

filtered_df = df[(df['Year'] >= 1992) & (df['Year'] <= 2020)].copy()

# Calculate the total medals for each country

filtered_df.loc[:, 'Total_Medals'] = filtered_df['Gold'] + filtered_df['Silver'] + filtered_df['Bronze']

total_medals = filtered_df.groupby('Country_Name')['Total_Medals'].sum().reset_index()

# Load the geojson file

geojson_file_path = 'world-countries.json'

with open(geojson_file_path) as geojson_file:

geojson_data = json.load(geojson_file)

# Define custom color scale

custom_color_scale = [

[0, "rgb(255, 255, 255)"], # No medals

[0.00001, "rgb(205, 127, 50)"], # Bronze

[0.5, "rgb(192, 192, 192)"], # Silver

[1, "rgb(255, 215, 0)"] # Gold

]

# Add countries with zero medals to ensure they are displayed in white

all_countries = pd.DataFrame({"Country_Name": [feature['properties']['name'] for feature in geojson_data['features']]})

total_medals = pd.merge(all_countries, total_medals, on="Country_Name", how="left").fillna(0)

# Create a Plotly choropleth map

fig = px.choropleth(

total_medals,

locations='Country_Name',

locationmode='country names',

color='Total_Medals',

hover_name='Country_Name',

color_continuous_scale=custom_color_scale,

title='Total Medals for Each Country (1992-2020)',

geojson=geojson_data,

featureidkey="properties.name",

scope='world'

)

# Update layout for better visualization

fig.update_geos(fitbounds="locations", visible=True, showcountries=True, countrycolor="black")

fig.update_layout(margin={"r":0,"t":30,"l":0,"b":0})

# Show the figure

fig.show()For this code snippet, after the data frame manipulation, the GeoJSON file ‘world-countries.json’ is loaded. This file contains geographical data (ie. country boundaries) for the entire globe.

Next, a data frame countries_df is created from the 'name' properties in the GeoJSON data and is merged with the total medals data frame on 'Country_Name', and any missing values are replaced with 0 (to ensure all countries are populated).

Next, GPT-4 helps us to create a custom color scale to match our global medals theme (using gold/silver/bronze).

All countries that have not won medals during this time period are then set to be white.

Lastly, a choropleth map is created using Plotly Express, mapping total medals to countries, with a custom color scale (rather than the super-ugly default “Viridis” color scheme).

This gives us the choropleth map (heat map) that displays the total medals for each country for the time period specified:

A beautiful themed map showing total medals by country!

Way easier than even I expected when I decided to try this out.

A few other changes you may want to prompt for include

setting a display ratio for height and width on the map

making the chart sizes adjustable

adding in a slider to display by year

These are exercises best left for another article.

Lastly, just keep in mind that GPT-4 is not always consistent in its code responses.

This is why the modular approach is so important. You can easily go back a step if you need to and re-prompt until you get the response you expect.

And that’s all for this newsletter folks!

Thank you for reading!

Maybe it will help ☺️ with analysis why people don’t convert to paid - because I’m a bit confused about what is offered to paid subscribers.

Is the subscription just for donation-based support, or are there exclusive articles that aren’t available for free here or on Medium? I’m a paid user on Medium, and it seems like the same articles are posted on both platforms.